The structure we already built

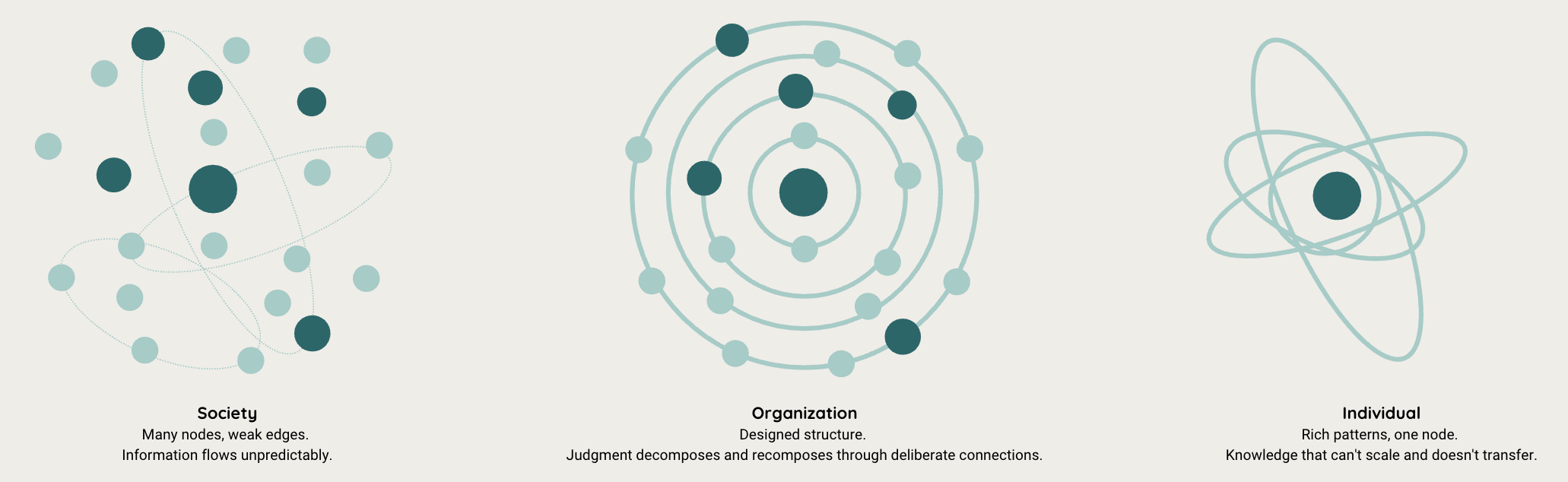

Organizations are the best mechanism humans have found for working with truly complex problems. Not the solo genius, because no single person can hold the full picture of a financial system, a clinical trial, or a regulatory regime. Not society as a whole, which has too many competing needs and objectives to converge on the kind of judgment these problems demand. The answer has been something in between: a group with a clear goal that no individual can achieve alone, with specializations that support that goal, and, critically, with information flowing between them to ensure the outcomes hold up.

What makes these organizations distinct from other kinds of work is what they're actually doing. Precision engineering allowed manufacturing to scale by making parts as interchangeable as possible, and factories were built to follow that same logic. The problems that live in the realm of expert judgment have stubbornly resisted the same treatment. They defy automation and standardization because the work itself requires adaptability, ingenuity, and the kind of pattern recognition that only develops through sustained exposure to real cases. We've found ways to scale the entry point through certification programs, university training, and other modern descendants of the guild. But the practice itself can't be taught from a book, firmwide guidance, or a manual, because this isn't the same kind of cognitive work as building a thing. It's the work of observing a thing, finding evidence, determining what might be happening, and issuing a judgment that others will rely on.

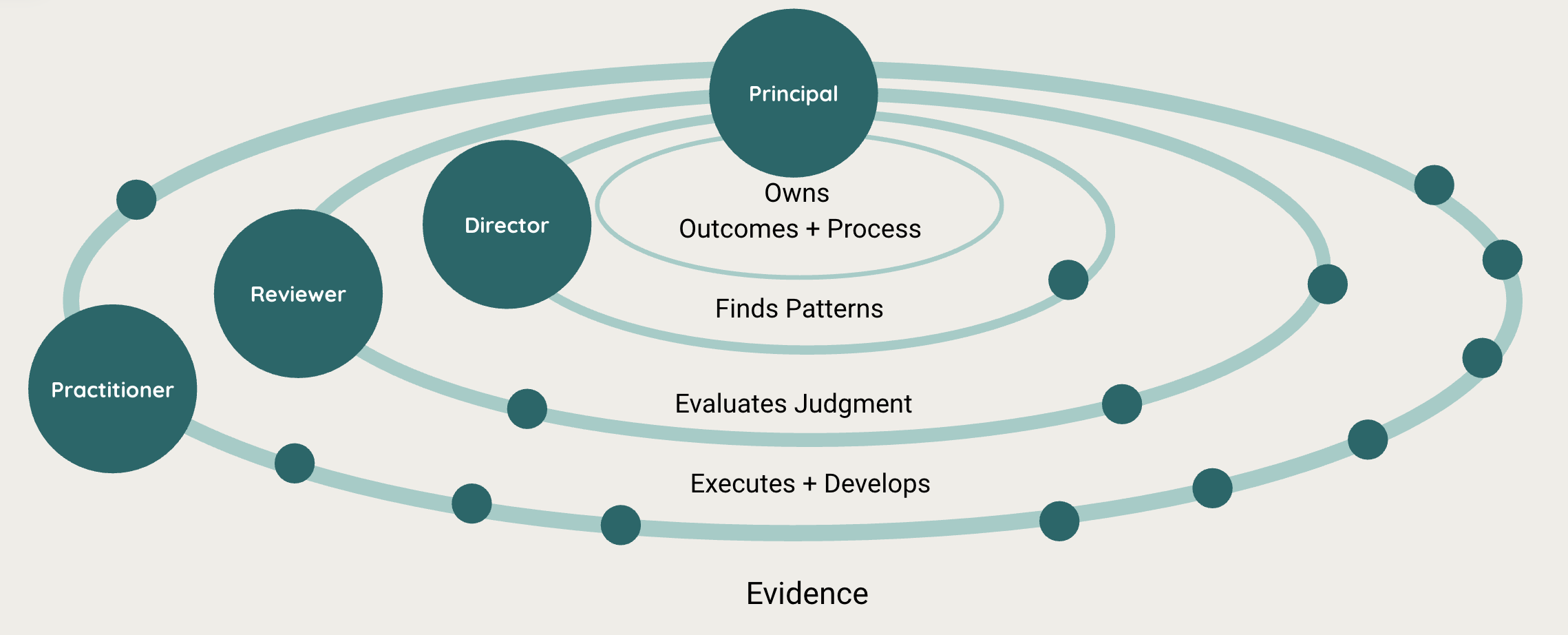

The organizations built around this kind of work (audit firms, clinical trial teams, legal practices, regulatory bodies) are fundamentally different from factories. In a factory, the intelligence lives in the process: standardize the handoffs, make the workers interchangeable, and the output stays the same. In an evaluation hierarchy, the intelligence lives in the connections between people. The edges between nodes are more amorphous than the nodes themselves, because what flows through them isn't output. It's judgment, reasoning, calibration, and learning. Herbert Simon called this a nearly decomposable system: the architecture that complex systems use to manage information across scales. Elliott Jaques extended the insight by showing that each level in such a hierarchy requires a qualitatively different kind of thinking, operating at a different time horizon. The practitioner handles what's in front of them today. The reviewer evaluates whether the right judgment was applied. The director watches for patterns across workstreams. And the principal holds the widest scope of all, not just the outcome, but the health of the process that produced it.

None of this was ever perfect. These organizations know that even with many people and carefully designed processes, it's impossible to review 100% of the evidence. So they assess risk, focus their people where evidence is most critical, and concentrate attention in those spots. The hierarchy has always been a compromise, the best available solution to an impossible problem. But it was designed. And it worked, not because the people in it were infallible, but because the structure itself carried information in ways that no individual could.

What's breaking

We keep making the same mistake.

The pattern is familiar by now: target efficiency gains at the lowest level of the hierarchy. Concentrate specializations into shared services. Keep the expensive experts for the engagements where they're really needed and deploy them more agilely. It sounds rational every time. And every time, it breaks the same thing: the information flow between the people doing the work and the people evaluating it.

This is what happened with outsourcing. Firms took the foundational evidence-gathering work, the least cognitively demanding layer and the highest-leverage target for cost reduction, and moved it to shared service centers. The output looked fine. The deliverables arrived on time. But the engagement team, where information had flowed freely between the practitioner and the reviewer, was broken apart. The reviewer could still check whether the numbers tied. What they couldn't do was evaluate whether the judgment behind those numbers was sound, because there was no judgment anymore. There was output.

The results showed up in the data. Big Four audit deficiency rates more than doubled between 2020 and 2022, climbing from 12% to 26%. Mid-tier firms fared worse, with deficiency rates above 60%. The PCAOB's own interviews with partners across the profession surfaced the concern directly. One audit partner put it plainly: "Our staff now will never see cash testing, as it is done offshore. We are going to see the impact of that when they are managers." The profession heard the warning. It continued anyway, because the economic incentives pointed toward standardization even as the quality data pointed away.

Then came RPA, robotic process automation, promising to automate the routine steps within the practice itself. And again, we didn't see the efficiency gains that were promised, because the bottleneck was never the mechanical work. The bottleneck was the information that needed to flow from the lowest level up through the hierarchy, and that information had never been carefully designed. It traveled implicitly, through conversation, through the questions a reviewer asked during a review meeting, through the hesitations and anomalies a practitioner noticed and mentioned in passing. None of that was captured in the standardized handoff. None of it survived the automation.

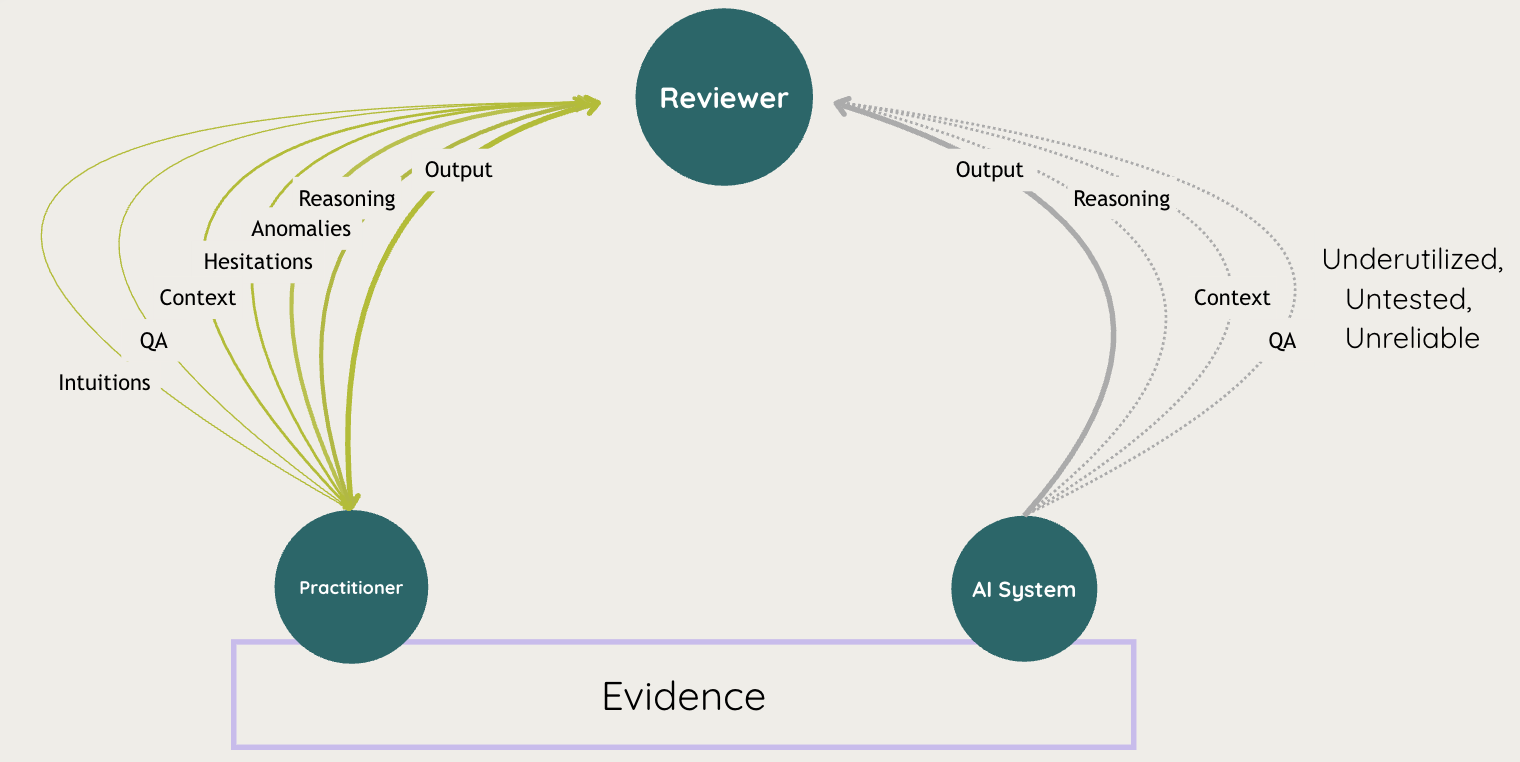

And now, with AI, we are following the same pattern, but it's more pervasive than before. AI isn't just being introduced at the bottom of the hierarchy. It's being introduced at every level simultaneously, alongside the humans who are still there, with zero design thinking about how information flows between them. The humans haven't been removed. They've been given tools that produce confident, clean output while stripping away the implicit signals that the hierarchy was built to carry. Engagement teams that have already been hollowed out by a decade of outsourcing and automation are now expected to integrate AI at every layer without anyone having designed how the combined human-AI node should communicate with the nodes around it.

A 2026 survey of 1,200 executives by Economist Enterprise confirms the gap. Fewer than two in five firms continue any form of oversight after an AI system goes live. Nearly nine in ten CTOs say their AI rollout is on track; only three in four VPs, the people actually running the work, agree. The people closest to the operations see the problem. The people setting strategy don't. And the reason they don't is that the observation layer, the thing that would tell them whether the information architecture is still functioning, doesn't exist.

The missing scale

There is an enormous amount of concern right now about what AI does to individual cognition. Whether expertise atrophies when we externalize thinking. Whether junior professionals will ever develop the pattern recognition that their seniors built through years of practice. Antony Slumbers calls it the breaking of the pyramid: firms cut the junior tier, harvest short-term productivity, and discover a decade later that no one learned how to do the work. The Centaurian AI group frames it differently: extend the individual's cognition, build a System 0 that structures your thinking before you ever touch the tool. Both are serious responses to a real problem. And at the other end of the scale, the societal concerns are just as urgent: workforce displacement, concentration of power, the erosion of institutions that democratic society depends on.

But I think what people are really worried about, underneath all of this, is that the organizations and institutions that have done the critical work of understanding and governing the complex systems of modern society (healthcare, finance, legal, government) will fall apart. The individual-scale fear and the societal-scale fear both point to the same place. They just don't name it.

What both conversations miss is this. At the individual level, yes, externalizing cognition poses risks. But organizations have always absorbed changes to individual nodes, whether new hires, retirements, or reorganizations, as long as information continued to flow through the structure. That's what the hierarchy was designed to do. The question was never about any single person. It was always about the connections. And at the societal level, the workforce concerns and institutional fragility that people point to are ultimately concerns about these organizations, the ones that do the difficult, consequential work of figuring things out on behalf of the rest of us.

The missing scale is the organizational one. And this is where cognitive science has something to offer. Herbert Simon didn't study organizations because he was interested in management. He studied them because they externalize the information structures we can't see inside a single mind. They make the flow of judgment across people visible, designable, and improvable. For decades, researchers in his tradition have studied how information moves through structured systems under constraints. Organizations are the richest laboratory for that work, because the constraints aren't biological. They're designed.

And here is what makes this moment different from outsourcing, different from RPA, different from every previous attempt to automate these organizations. AI is, at its core, an information technology. Information flows through it freely, in ways that others can understand and build on. Unlike a shared service center that sends back a spreadsheet, unlike an RPA bot that executes a rule, an AI system can carry reasoning, surface context, flag anomalies, and communicate in natural language across every level of the hierarchy simultaneously.

We have, for the first time, a technology that is natively good at exactly the thing these organizations have always struggled with: moving rich information between people at different levels, at the appropriate level of abstraction for each time horizon. A practitioner's observations could flow to the reviewer as detailed judgment, to the director as a pattern across workstreams, and to the principal as a signal about the health of the process, at the same time, at all scales.

But we're not designing for that. We're so focused on the nodes, which ones to replace, which ones to preserve, which ones to augment, that we're missing the edges entirely. We're using the most powerful information technology we've ever had to do the same thing outsourcing did: standardize the bottom of the hierarchy and hope the top figures it out.

The question nobody is asking

The debate right now is stuck between two positions: preserve the apprenticeship model, or automate the work and move on. But this is a false choice. The apprenticeship model was always inefficient: hundreds of hours of mechanical work to produce a handful of moments where judgment actually developed. Cash testing is the example everyone in the profession knows: the work is grueling, and most people leave before they absorb what it was teaching them. A practitioner working alongside AI doesn't have to spend two hundred hours on it to encounter five moments where something doesn't feel right. And automation without design simply strips out the information that made the hierarchy function. Neither option takes seriously what this technology actually is.

The question we should be asking is not whether to replace the nodes or preserve them. It's whether we can enrich them, every node, at every level, with more information than any of them had access to before.

And the information can flow in both directions, and across levels, far more efficiently than it ever could through purely human channels. A reviewer doesn't just receive output from below. They receive reasoning, context, confidence signals. A director sees anomaly patterns across workstreams in real time, and a principal gets a live signal about the health of the process itself, at the time horizon that matches their responsibility.
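To make the idea concrete, here is a minimal sketch of one set of practitioner observations rolled up three ways, one for each level above. Everything in it is an assumption for illustration: the `Observation` fields, the three view functions, and the 0.6 confidence threshold are hypothetical, not a description of any firm's system.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Observation:
    """One practitioner note on a piece of evidence (hypothetical schema)."""
    workstream: str
    item: str
    reasoning: str
    confidence: float  # 0.0 (no confidence) to 1.0 (certain)
    anomaly: bool


def reviewer_view(observations):
    """Reviewer: the reasoning and confidence behind every item."""
    return [(o.item, o.reasoning, o.confidence) for o in observations]


def director_view(observations):
    """Director: anomaly counts per workstream, to expose patterns."""
    return dict(Counter(o.workstream for o in observations if o.anomaly))


def principal_view(observations, low_confidence=0.6):
    """Principal: one process-health signal, the share of low-confidence calls."""
    if not observations:
        return 0.0
    low = sum(1 for o in observations if o.confidence < low_confidence)
    return low / len(observations)


# Illustrative data only.
notes = [
    Observation("cash", "bank rec, March", "timing difference, cleared in April", 0.9, False),
    Observation("cash", "bank rec, June", "unexplained reconciling item", 0.4, True),
    Observation("revenue", "cutoff sample 12", "invoice dated after shipment", 0.5, True),
    Observation("revenue", "cutoff sample 13", "matches shipping record", 0.95, False),
]

print(reviewer_view(notes)[1])   # the detail a reviewer would see for one item
print(director_view(notes))      # {'cash': 1, 'revenue': 1}
print(principal_view(notes))     # 0.5
```

The point of the sketch is that all three views are computed from the same underlying notes, so nothing has to be retyped, summarized by hand, or lost in a handoff for each level to get the abstraction it needs.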

Every node gets richer information. Every edge carries more signal. The hierarchy doesn't flatten. It intensifies.

And if we go further: if the hierarchy originally arose from human cognitive constraints (bounded rationality, limited working memory, limited channel capacity between individuals) and if AI fundamentally changes those constraints, then the shape of the hierarchy itself may change. Not because we cut layers to save money, as outsourcing did. But because the information architecture no longer requires as many layers to achieve the same quality of judgment. Fewer layers, but richer edges. Every node a human-AI partnership. The hierarchy becomes flatter not by default but by design, because the constraints that gave it its shape have shifted. Erik Brynjolfsson has shown that the productivity gains from general-purpose technologies only materialize after firms redesign their organizations around them. The redesign we're describing is the one that hasn't happened yet.

And this is where work like the Centaurian AI group's becomes foundational. Getting the specialist-plus-AI partnership right at the individual level, understanding how a single expert thinks and works alongside these systems, is essential groundwork. The organizational design we're describing here depends on each node functioning well. But the node alone isn't enough. The design has to extend to the edges between them.

This is, at its core, an information-theoretic problem. Claude Shannon's foundational insight was that every channel has a capacity, a maximum rate at which information can be transmitted reliably. The hierarchy has always been a compression algorithm for judgment: each level compresses the work below it into something the level above can process, within the cognitive limits of the people at each node. That compression was necessarily lossy, and it was designed for human-only channels. But if AI changes the bandwidth of every edge, if each node can now process richer information than before, then we have the opportunity to redesign those compression algorithms entirely. We can preserve signal that was previously discarded out of necessity. We can carry reasoning, not just conclusions. We can make the hierarchy's judgment more trustworthy, not less, than it was before AI arrived.
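A toy calculation can show what "lossy" means here. The sketch below uses Shannon entropy of a set of labels as a rough proxy for how much information each item carries, then collapses the practitioner's findings into the clean-or-exception summary a higher level might receive. The findings and the collapse rule are invented for illustration, and entropy of labels is only a stand-in, not a measurement of any real channel's capacity.

```python
import math
from collections import Counter


def entropy_bits(labels):
    """Shannon entropy, in bits, of the empirical distribution of labels."""
    counts = Counter(labels)
    total = len(labels)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Hypothetical practitioner findings on eight sampled items.
findings = [
    "clean", "clean", "clean", "clean",
    "timing difference", "timing difference",
    "missing support", "unexplained variance",
]

# Lossy compression for the next level up: anything not clean becomes "exception".
summary = ["clean" if f == "clean" else "exception" for f in findings]

detail_bits = entropy_bits(findings)   # 1.75 bits per item
summary_bits = entropy_bits(summary)   # 1.0 bit per item
print(detail_bits, summary_bits, detail_bits - summary_bits)
```

In this example the summary drops 0.75 bits per item, which is exactly the distinction between a timing difference, missing support, and an unexplained variance. A richer edge is one that no longer has to throw that distinction away.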

But none of this happens by default. It has to be designed into the relationship between the human and the system, deliberately, structurally, at the organizational level. The question is not whether a junior auditor should do cash testing or whether an AI should do it instead. The question is whether we can design a system where that junior develops the pattern recognition that cash testing used to build, faster, at higher density, through a system that engages them at the judgment layer rather than the mechanical one. That's not a technology question. It's not an individual cognition question. It's an organizational design question.

The irony

Here is the irony. We've been talking about supercharging the information flowing through every edge of the hierarchy, and the technology to do it is already generating exactly the signals we need. Every interaction between a human and an AI system produces data: what was asked, what was returned, what was accepted, what was corrected, what was ignored. The conversations between levels that used to happen in a meeting room and vanish could now be captured, structured, and fed back into the system. The director's calibration, the reviewer's correction, the practitioner's hesitation, all of it could be observable in ways that were never possible when the hierarchy ran on purely human channels. But as we introduce these tools, we are doing almost nothing to capture and monitor how they are being used, what the interactions look like, and how those insights flow through the organization.
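As a minimal sketch of what capturing those signals could look like, the record below holds the elements named above (what was asked, what was returned, and whether it was accepted, corrected, or ignored) and summarizes them by level. The `Interaction` schema, the `Outcome` values, and the `outcome_rates` helper are hypothetical names chosen for illustration, not an existing product or standard.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    ACCEPTED = "accepted"
    CORRECTED = "corrected"
    IGNORED = "ignored"


@dataclass
class Interaction:
    """One human-AI exchange (hypothetical schema)."""
    level: str      # e.g. "practitioner", "reviewer", "director"
    asked: str
    returned: str
    outcome: Outcome


def outcome_rates(log, level):
    """Share of each outcome among one level's interactions."""
    outcomes = [i.outcome for i in log if i.level == level]
    if not outcomes:
        return {}
    counts = Counter(outcomes)
    return {o.value: counts[o] / len(outcomes) for o in Outcome}


# Illustrative data only.
log = [
    Interaction("practitioner", "tie bank rec to ledger", "ties, no exceptions", Outcome.ACCEPTED),
    Interaction("practitioner", "explain June variance", "likely timing", Outcome.CORRECTED),
    Interaction("practitioner", "summarize cutoff tests", "two late invoices", Outcome.ACCEPTED),
    Interaction("practitioner", "suggest sample size", "25 items", Outcome.IGNORED),
    Interaction("reviewer", "assess variance explanation", "reasonable", Outcome.CORRECTED),
]

print(outcome_rates(log, "practitioner"))
# {'accepted': 0.5, 'corrected': 0.25, 'ignored': 0.25}
```

Even a record this simple makes a question answerable that a meeting room never could: at which level, and on which kinds of requests, are people correcting or ignoring what the system returns?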

There are a few of us working on this, and what we're seeing in production confirms what this piece argues: the signals are there, they're rich, and when you instrument the organizational layer, the whole structure starts to become visible in ways it never was before.

The humans operating within these hierarchies are not separate from the system. They are the system. They cannot be reduced to reviewers of automated output. They need to become the signal, the source of adaptation, correction, and judgment that makes the whole structure more trustworthy over time. The most valuable output isn't the work product. It's the information about how the work gets done. The unit of design is not the individual, and it is not the agentic system. It is the organization.

The hierarchy was always a compromise. For the first time, we have the technology to make it better than it was. Not by fearing its destruction, but by designing what comes next. How many layers? How many constraints? We can't know without careful experimentation and design.

Citations

- Claude Shannon, "A Mathematical Theory of Communication" (1948)

- Herbert Simon, "The Architecture of Complexity" (1962)

- Elliott Jaques, "In Praise of Hierarchy" (1990)

- Erik Brynjolfsson, Daniel Rock, and Chad Syverson, "The Productivity J-Curve: How Intangibles Complement General Purpose Technologies" (2021)

- Centaurian AI, "Thinking with Machines" (2024)

- PCAOB, "Insights on Culture and Audit Quality" (2024)

- Antony Slumbers, "The Pyramid Has Already Broken" (2026)

- Economist Enterprise, "Making AI Deliver" (2026)

Marisa Ferrara Boston holds a PhD in Cognitive Science from Cornell University and has spent her career building systems for expert augmentation and machine adaptation in hierarchical knowledge organizations.