Enterprise AI is being deployed into expert organizations as if the work were a collection of tasks.

Extract the document. Summarize the memo. Review the contract. Test the control. Produce the output faster.

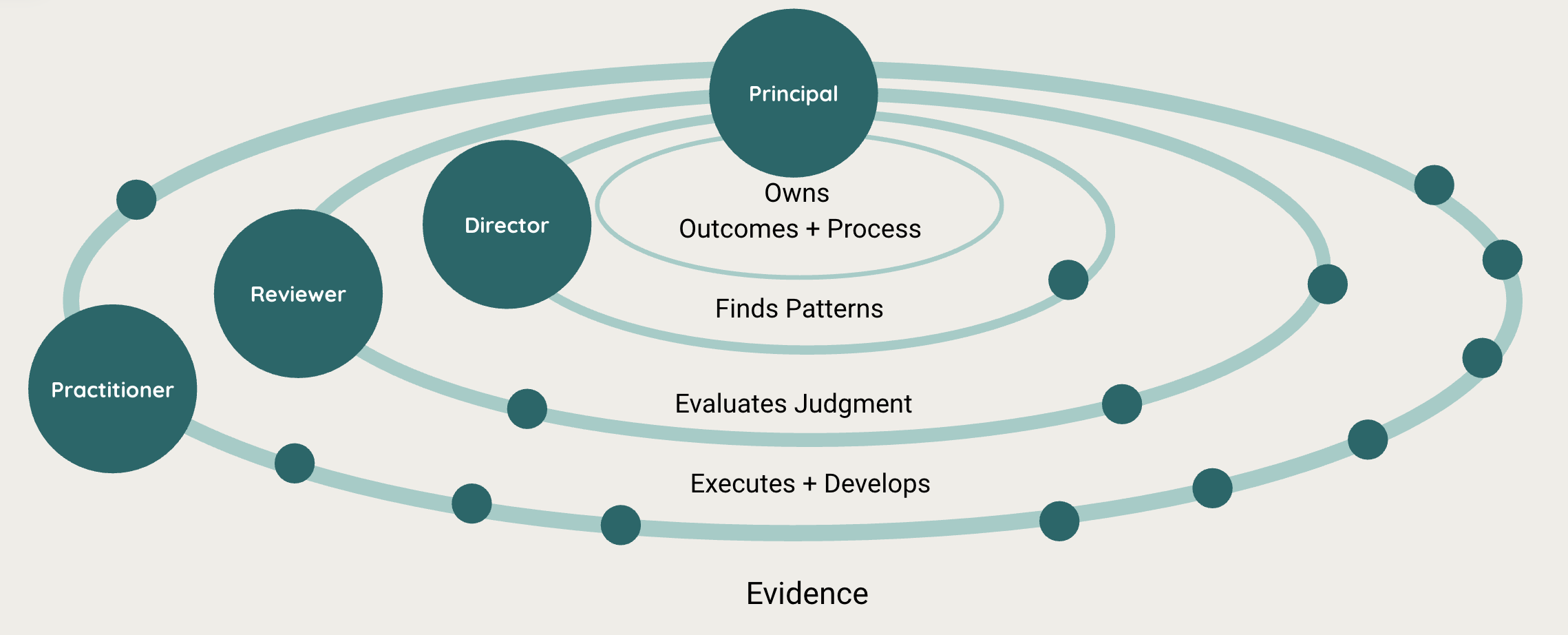

But the organizations that exercise audit, legal, regulatory, clinical, and financial judgment do not work because tasks are completed efficiently. They work because judgment moves through a structure. A practitioner notices something. A reviewer asks whether the judgment holds. A director sees a pattern across workstreams. A principal watches the health of the process itself.

The work product matters, of course. But the organization depends on something harder to see: the flow of observations, doubts, exceptions, corrections, and calibration between people.

And somehow, that is the part we keep managing to break.

Outsourcing broke it by separating the people doing the work from the people responsible for evaluating it. RPA broke it by automating routine steps without capturing the judgment that surrounded them. AI could break it again, at much larger scale, by producing cleaner outputs while stripping away the signals that expert hierarchies need to function.

I do not think that is the only possible path.

AI could help us redesign the organization itself: not by replacing individual professionals, and not by preserving every old layer of the hierarchy, but by improving how information, judgment, and correction move through the system.

The unit of design is not the individual, and it is not the agentic system. It is the organization.

Expert organizations are not factories

Organizations are the best mechanism humans have found for working on problems that no individual can fully hold alone.

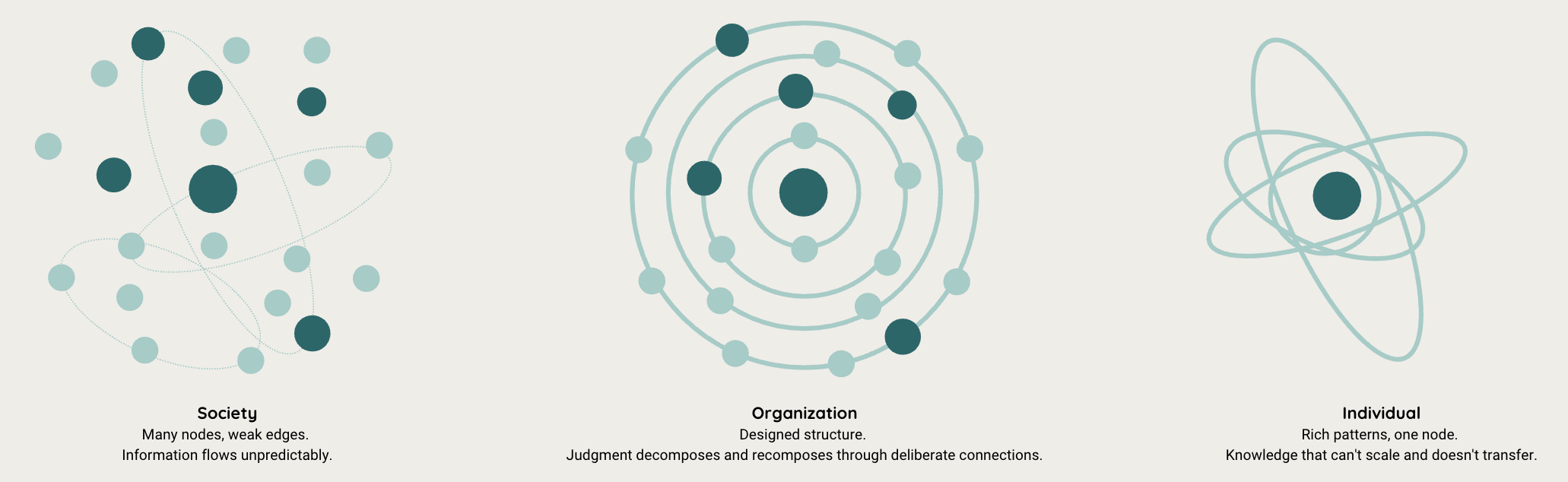

No single person can see the whole of a financial system, a clinical trial, a regulatory regime, or a complex legal matter. And society as a whole is too diffuse to make the specific judgments these problems require. So we built something in between: organizations with shared goals, specialized roles, and structures for moving information between people.

That structure matters because expert work is not factory work.

In a factory, intelligence can be embedded in the process. Standardize the parts, standardize the handoffs, make the work repeatable, and the output becomes more reliable. The goal is to reduce variation.

Expert organizations work differently. Their hardest problems resist full standardization because the work depends on observing messy evidence, recognizing patterns, weighing exceptions, and making judgments that others will rely on. A good audit, clinical review, investigation, or legal analysis is not just the sum of completed tasks. It is the result of many partial observations being tested, challenged, corrected, and recomposed into a judgment that can hold.

That is why hierarchy exists.

It is easy to see hierarchy only as authority, especially because organizations often misuse it that way. But in expert organizations, hierarchy also serves a cognitive purpose. In Elliott Jaques’s terms, each level carries a different scope of judgment and operates over a different time horizon. The practitioner sees what is happening in the case. The reviewer asks whether the judgment holds. The director looks for patterns across workstreams. The principal watches the health of the process itself.

The intelligence is not located in any one person. It lives in the connections between them.

These organizations have never been perfect. They have always been a compromise. No audit team, clinical team, legal team, or regulator can review every piece of evidence with equal depth. So they assess risk. They direct attention. They decide where human judgment matters most. The structure works because information moves through it in ways that no individual could reproduce alone.

That is the part automation has a habit of missing.

The damage was never just automation

Once you see organizations this way, the past twenty years of efficiency programs look different.

The usual story is that firms moved routine work out of expensive expert teams because it looked easier to standardize: evidence gathering, document preparation, reconciliations, first-pass testing, standardized review steps.

But the separation was never as clean as it looked.

Take audit as an example. When foundational testing moved to shared service centers, the output often still arrived. Sometimes it arrived faster. The spreadsheet was complete. The boxes were checked. The file had the expected artifacts.

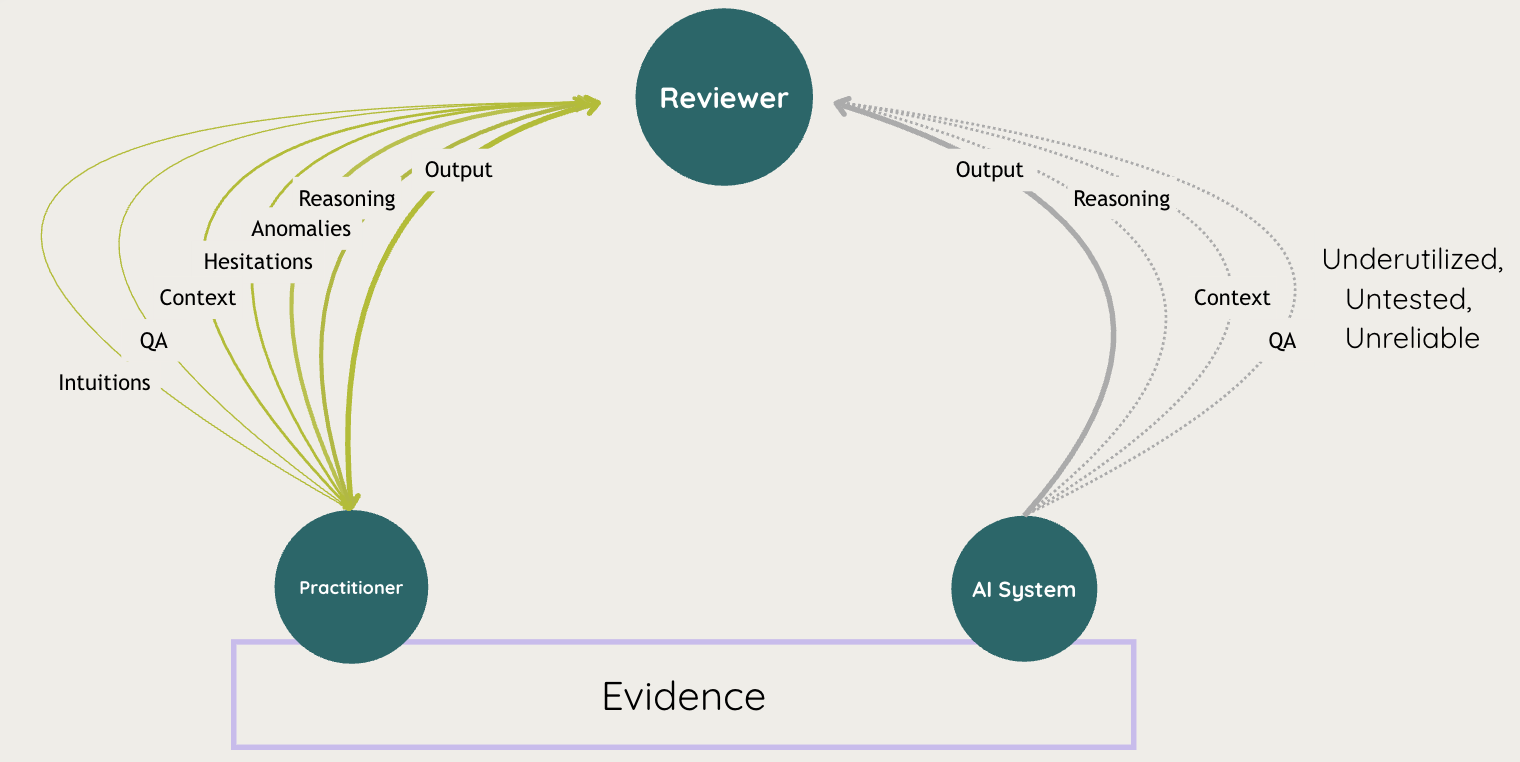

What disappeared was harder to see: the questions, hesitations, anomalies, and small acts of judgment that used to surround the work. A reviewer could still inspect the final output, but they had less visibility into how the work had been done, what had seemed strange, where the practitioner had been uncertain, and what patterns were starting to emerge.

The problem was not simply that junior people lost practice, although they did. The deeper problem was that the organization lost signal.

The PCAOB’s inspection results make that concern difficult to dismiss. Among the Big Four, audit deficiency rates rose from roughly 12% in 2020 to 26% in 2022. Mid-tier firms fared worse, with deficiency rates above 60%. Those numbers do not prove that outsourcing alone caused the problem, but they do show a profession whose quality model is under strain at exactly the moment more work has been standardized, distributed, and pushed away from the engagement team.

One audit partner put the learning problem plainly: “Our staff now will never see cash testing, as it is done offshore. We are going to see the impact of that when they are managers.”

That is true. But it is only part of the story. The quality problem does not begin ten years later, when the apprentice fails to become an expert. It begins immediately, when the hierarchy receives output without the surrounding judgment.

RPA repeated the pattern in a different form. It automated steps inside the process, but the bottleneck was rarely the mechanical step itself. The bottleneck was the meaning around the step: why something was escalated, what exception was ignored, what uncertainty remained, what a human would have noticed but not written down.

The old review meetings, side conversations, and informal corrections were inefficient. They were also carrying information. When organizations replaced or bypassed those interactions without designing a new channel for the signal, they mistook the artifact for the work.

Automation has a habit of doing that.

AI is entering a weakened hierarchy

AI is now being introduced into organizations that have already spent years weakening the channels through which judgment used to travel.

That is what makes this moment different from outsourcing and RPA. AI is not only being applied to routine work at the bottom of the hierarchy. It is being added everywhere at once: to the practitioner drafting the memo, the reviewer checking the work, the director monitoring the portfolio, and the executive trying to understand whether the whole process is improving or deteriorating.

The risk is not only that AI will make mistakes. Of course it will. The larger risk is that AI will produce clean, confident, plausible output while further compressing the signals the hierarchy needs to function.

A reviewer may receive a better-formatted workpaper with less visibility into what the practitioner noticed. A director may see more dashboards and fewer meaningful explanations of why exceptions are clustering. A principal may hear that productivity has improved while losing sight of whether the organization is still developing judgment, escalating uncertainty, and learning from errors.

This gap is already visible in how organizations talk about AI progress. Economist Enterprise’s 2026 report, Making AI Deliver, surveyed 1,221 executives at large enterprises and found a corporate world full of AI activity but still struggling to turn that activity into durable operational capability. More than four in five executives said their AI programs were beating expectations, yet only about two in five firms formally required teams to track business impact. The same report found a seniority gap in how AI progress is perceived: nearly nine in ten CTOs said their AI rollout was ahead of schedule, while only about three in four senior vice-presidents and vice-presidents agreed.

That difference matters. The people closer to the work are often the first to see when the formal story and the operational reality have started to diverge.

The oversight gap is just as important. The report found that about three in five firms review AI systems during development and before deployment, but fewer than two in five continue that oversight after a system goes live. One in eight firms reviews governance only when something goes wrong. That is exactly backward for expert organizations, where the real test is not whether a system looked acceptable before deployment, but whether it continues to support judgment once it is inside the work.

Most AI governance still treats the system as if it ends at the model boundary. But in expert organizations, the system includes the humans using the model, the reviewers interpreting the output, the managers deciding what to escalate, and the leaders accountable for the process. If we only evaluate the AI’s answer, we miss the larger structure that determines whether the answer can be trusted.

The danger is that we repeat the same pattern with a more powerful technology. We preserve the artifact, accelerate the handoff, and make the output cleaner, while losing even more of the judgment that was supposed to move through the organization.

The intelligence is in the connections

The scale we keep skipping over is the organization.

Most AI conversations move between two levels. At the individual level, people ask what happens to expertise when professionals rely on AI to think, draft, summarize, code, review, or decide. At the societal level, people ask what happens to jobs, institutions, inequality, and power.

Both concerns are real. But the work that connects them happens inside organizations.

Organizations are where individual judgment becomes institutional judgment. They are where a practitioner’s observation becomes a reviewer’s question, where a reviewer’s correction becomes a pattern a director can see, and where those patterns become decisions about risk, staffing, methods, training, and accountability.

This is why Herbert Simon treated organizations as more than management structures. They were systems for coping with bounded rationality: ways of distributing attention, information, and decision-making across people who could not individually hold the whole problem. The organization made complex judgment possible by breaking it apart and recomposing it through structure.

Hierarchy, in this kind of work, is not only command. It organizes different scopes of responsibility. Each level sees something the others cannot, from the evidence in a single case to the health of the process as a whole.

When those connections work, the organization can know more than any individual inside it. When they weaken, the organization becomes less intelligent even if every individual node becomes more productive.

That is the mistake we keep making with AI. We focus on the nodes: which worker can be replaced, which task can be automated, which agent can produce which output. But the organization’s intelligence has always lived in the edges between people. The real design question is what those edges need to carry now.

Richer edges, not replacement nodes

The usual debate about AI in expert work gets stuck between two unsatisfying options: preserve the old apprenticeship model, or automate the work and move on.

I think that framing misses the real design problem.

The apprenticeship model did important work, but it was never especially efficient. Junior professionals often spent hundreds of hours on repetitive tasks in order to encounter a small number of moments where judgment actually developed. They learned by doing the work, but also by noticing exceptions, getting corrected, hearing how reviewers reasoned, and slowly absorbing what mattered.

Automation without design has the opposite problem. It removes the repetition, but often removes the surrounding judgment with it. The person receives the answer, the completed workpaper, the extracted clause, the summarized memo. What disappears is the trail of uncertainty, the reason something was flagged, the alternative interpretation that was considered and rejected, the small correction that would have taught someone what to look for next time.

AI makes a different design possible.

A practitioner working with AI should not simply produce more work faster. They should encounter denser judgment. The system should help them see anomalies, compare cases, ask better questions, and understand why something deserves attention. A reviewer should not simply receive a cleaner artifact. They should receive the reasoning around the artifact: what the practitioner considered, where the AI was uncertain, what changed after review, and what may need to be escalated.

At the director level, AI should make patterns visible across workstreams: recurring exceptions, inconsistent judgments, repeated overrides, brittle procedures, and areas where teams are compensating for process gaps. At the principal level, AI should provide a signal about the health of the process itself: whether judgment is improving, whether uncertainty is being escalated, whether corrections are being absorbed, and whether the organization is learning from its own work.
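To make the idea of richer handoffs and director-level pattern detection a little more concrete, here is a minimal Python sketch. The `Handoff` schema, its field names, and both helper functions are hypothetical, invented for this illustration; a real design would need a schema grounded in the actual work and its review practices.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """One practitioner-to-reviewer handoff (hypothetical schema)."""
    workstream: str
    artifact: str                                          # the work product itself
    considered: list[str] = field(default_factory=list)    # alternatives the practitioner weighed
    ai_uncertain: list[str] = field(default_factory=list)  # points where the AI was unsure
    exceptions: list[str] = field(default_factory=list)    # exception types noted in the work
    overridden: bool = False                               # did the human override the AI?


def recurring_exceptions(handoffs: list[Handoff], min_workstreams: int = 2) -> dict[str, int]:
    """Exception types seen in at least `min_workstreams` distinct workstreams."""
    seen: dict[str, set[str]] = {}
    for h in handoffs:
        for exc in set(h.exceptions):
            seen.setdefault(exc, set()).add(h.workstream)
    return {exc: len(ws) for exc, ws in seen.items() if len(ws) >= min_workstreams}


def override_rate(handoffs: list[Handoff]) -> dict[str, float]:
    """Share of handoffs per workstream in which the human overrode the AI."""
    totals, overrides = Counter(), Counter()
    for h in handoffs:
        totals[h.workstream] += 1
        overrides[h.workstream] += int(h.overridden)
    return {ws: overrides[ws] / totals[ws] for ws in totals}


# Illustrative data only; the workstreams and exception labels are made up.
handoffs = [
    Handoff("revenue", "memo-1", exceptions=["late cutoff"], overridden=True),
    Handoff("revenue", "memo-2", exceptions=["late cutoff"]),
    Handoff("inventory", "memo-3", exceptions=["late cutoff", "missing count sheet"]),
]
print(recurring_exceptions(handoffs))  # {'late cutoff': 2}
print(override_rate(handoffs))         # {'revenue': 0.5, 'inventory': 0.0}
```

The design choice worth noticing is that the artifact is only one field among several. The reasoning fields are what let a pattern such as an exception recurring across workstreams become visible at all.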

A flatter hierarchy may eventually be possible, but not for the reason efficiency programs usually assume. The case for fewer layers should not be that people can be cut out. It should be that the information architecture has changed. If each role can work with more context, and each handoff carries more reasoning, the organization may need fewer layers to preserve the same quality of judgment. That is a design question, not a headcount shortcut.

This is the organizational redesign problem that Erik Brynjolfsson, Daniel Rock, and Chad Syverson describe in their work on the productivity J-curve. General-purpose technologies do not produce their full gains when firms simply adopt them. The gains arrive when organizations make the complementary investments that change how work is actually done. For AI in expert organizations, one of those missing investments is the redesign of the judgment channels themselves.

In an organization designed this way, people do not simply move faster. They have more context, and the handoffs between them carry more of the judgment that used to disappear.

This is where work like Centaurian AI’s becomes important. Getting the specialist-plus-AI partnership right at the individual level is foundational. If a lawyer, auditor, clinician, analyst, or executive is going to work with AI, the system has to support how that person actually reasons, notices, questions, and decides.

But the node alone is not enough. The design has to extend to the edges between nodes: what gets passed forward, what gets escalated, what gets corrected, and what the organization learns from the interaction.

The opportunity is not just to supercharge individual experts. It is to make the organization better at knowing what it knows, noticing what it does not know, and improving from the work it is already doing.

The signals are already there

Claude Shannon’s work on information theory gives us a useful way to see what hierarchy has always been doing. A hierarchy is, in part, a compression system for judgment. Each level reduces the work below into something the level above can process. That compression has always been lossy because human channels are limited. Meetings are short. Review notes are partial. Much of what people notice, question, correct, or worry about never gets written down.
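One way to state the lossy-compression point more precisely is the data processing inequality from information theory. It is offered here as an analogy, not a measurement. The symbols are assumptions introduced for this illustration: $W$ is the underlying work, $S_1$ is the summary a reviewer receives, and $S_2$ is the summary a director receives, assumed to be built only from $S_1$.

$$
W \;\to\; S_1 \;\to\; S_2
\quad\Longrightarrow\quad
I(W; S_2) \;\le\; I(W; S_1) \;\le\; H(W)
$$

Under that assumption, no later level can recover information about the work that an earlier handoff dropped. That is why what the first handoff carries matters so much.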

AI changes the possible bandwidth of those channels.

It can preserve reasoning, uncertainty, correction, context, and disagreement that used to disappear. It can show not just the final artifact, but how the artifact came to be: what was asked, what evidence was used, what alternatives were considered, what the human accepted, what the human changed, and where the system failed to help.

This is also what high reliability organizations have always understood. In Managing the Unexpected, Karl Weick and Kathleen Sutcliffe argue that reliable organizations do not achieve safety by pretending the world is stable. They achieve it by staying sensitive to operations, noticing weak signals, revising their understanding, and updating context before small anomalies become large failures.

That is the organizational capability AI should strengthen: faster context updating. Not just faster output, but a richer picture of what is happening inside the work.

The signals are already being produced.

Every interaction between a human and an AI system leaves traces: prompts, responses, edits, overrides, escalations, ignored suggestions, repeated corrections, moments of trust, moments of hesitation. These are not just product analytics. In an expert organization, they are evidence about how judgment is moving through the system.
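As a rough sketch of what it could mean to treat these traces as structured evidence, the Python below defines a small record type and one summary over it. The `Trace` and `Action` names, the action categories, and `action_mix` are all hypothetical, chosen for this illustration and not drawn from any particular product or logging standard.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    """How a human responded to an AI suggestion (hypothetical categories)."""
    ACCEPTED = "accepted"      # kept the suggestion as-is
    EDITED = "edited"          # changed the suggestion before using it
    OVERRIDDEN = "overridden"  # rejected it in favor of their own judgment
    ESCALATED = "escalated"    # passed the question up a level
    IGNORED = "ignored"        # never acted on the suggestion


@dataclass(frozen=True)
class Trace:
    case_id: str
    role: str       # e.g. "practitioner" or "reviewer"
    action: Action
    note: str = ""  # optional free-text reason


def action_mix(traces: list[Trace]) -> dict[str, dict[str, float]]:
    """For each role, the share of its interactions that ended in each action."""
    by_role: dict[str, Counter] = {}
    for t in traces:
        by_role.setdefault(t.role, Counter())[t.action.value] += 1
    return {
        role: {action: n / sum(counts.values()) for action, n in counts.items()}
        for role, counts in by_role.items()
    }


# Illustrative data only.
traces = [
    Trace("c1", "practitioner", Action.ACCEPTED),
    Trace("c2", "practitioner", Action.ACCEPTED),
    Trace("c3", "practitioner", Action.EDITED, "clause misread"),
    Trace("c3", "practitioner", Action.ESCALATED, "unusual counterparty"),
    Trace("c3", "reviewer", Action.OVERRIDDEN),
]
mix = action_mix(traces)
print(mix["practitioner"])  # {'accepted': 0.5, 'edited': 0.25, 'escalated': 0.25}
```

A summary like this is not a quality score. A role whose escalation share drifts toward zero might reflect growing confidence or a silenced channel, and telling the two apart still takes human interpretation.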

Most organizations are not yet treating those traces as evidence about the work.

They may monitor whether an AI system is accurate, fast, adopted, or cost-effective. Those measures matter. But they do not tell leaders whether the organization is becoming better at the work. They do not show whether uncertainty is being escalated, whether reviewers are correcting the right things, whether teams are learning from errors, or whether AI is quietly narrowing the information that reaches the next level.

This is why post-deployment oversight matters so much. Economist Enterprise found that fewer than two in five firms continue oversight after an AI system goes live, even though live operation is where models drift, data shifts, edge cases multiply, and people begin adapting their work around the system. For expert organizations, that is exactly the point when the most important information begins to appear.

The humans working with these systems do not stand outside the AI system, merely reviewing its output. They are part of it. Their questions, corrections, refusals, escalations, and workarounds are the mechanism by which the organization learns.

For AI in expert work, monitoring cannot stop at model behavior.

The goal is not only to detect when an AI system produces a bad answer. It is to understand how the organization responds when the answer is incomplete, uncertain, misleading, or misaligned with the work. Does the practitioner notice? Does the reviewer correct? Does the director see the pattern? Does the principal understand whether the process itself is improving or degrading?
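Those four questions can be read as asking where in the hierarchy a flawed output is first caught. The sketch below assumes a set of AI outputs later judged to be flawed, each with a time-ordered list of `(level, action)` events. The level names follow the essay, while the action labels and function names are hypothetical.

```python
from typing import Optional

LEVELS = ["practitioner", "reviewer", "director", "principal"]
CATCHING_ACTIONS = {"corrected", "escalated"}  # hypothetical labels for "someone noticed"


def first_catch(events: list[tuple[str, str]]) -> Optional[str]:
    """Return the first level that corrected or escalated the output, else None."""
    for level, action in events:
        if action in CATCHING_ACTIONS:
            return level
    return None


def catch_profile(cases: dict[str, list[tuple[str, str]]]) -> dict[str, int]:
    """Count, per level, how many flawed outputs were first caught there."""
    profile = {level: 0 for level in LEVELS}
    profile["uncaught"] = 0
    for events in cases.values():
        level = first_catch(events)
        key = level if level is not None else "uncaught"
        profile[key] = profile.get(key, 0) + 1
    return profile


# Illustrative data only: three flawed outputs and what happened to each.
cases = {
    "c1": [("practitioner", "accepted"), ("reviewer", "corrected")],
    "c2": [("practitioner", "escalated")],
    "c3": [("practitioner", "accepted"), ("reviewer", "accepted")],
}
print(catch_profile(cases))
# {'practitioner': 1, 'reviewer': 1, 'director': 0, 'principal': 0, 'uncaught': 1}
```

A profile that shifts toward later levels, or toward "uncaught", would suggest that judgment is moving less well through the structure even if the model's own accuracy has not changed.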

In AI-enabled expert work, the most valuable output is not always the work product. Sometimes it is the information about how the work got done.

For the first time, organizations have a practical way to observe the judgment layer: not perfectly, not automatically, and not without careful design, but far more directly than before. The conversations that used to vanish in meetings, side channels, review notes, and individual memory can become part of the learning system itself.

That is the opportunity AI creates: not just faster or cheaper work, but an organization that can see more clearly how judgment is forming, where it is breaking down, and how it can improve.

Citations

- Claude Shannon, "A Mathematical Theory of Communication" (1948)

- Herbert Simon, "The Architecture of Complexity" (1962)

- Elliott Jaques, "In Praise of Hierarchy" (1990)

- Karl Weick and Kathleen Sutcliffe, Managing the Unexpected: Sustained Performance in a Complex World (2015)

- Erik Brynjolfsson, Daniel Rock, and Chad Syverson, "The Productivity J-Curve: How Intangibles Complement General Purpose Technologies" (2021)

- Centaurian AI, "Thinking with Machines" (2024)

- PCAOB, "Insights on Culture and Audit Quality" (2024)

- Antony Slumbers, "The Pyramid Has Already Broken" (2026)

- Economist Enterprise, "Making AI Deliver" (2026)

Marisa Ferrara Boston holds a PhD in Cognitive Science from Cornell University and has spent her career building systems for expert augmentation and machine adaptation in hierarchical knowledge organizations. Through Reins AI, she works with organizations deploying AI in regulated expert domains to design evaluation, monitoring, and adaptation loops that preserve and strengthen human judgment.